Fortescue Metals takes control of Nullagine Iron Ore Joint Venture for $1

Fortescue Metals Group has today announced that it has agreed to acquire BC Iron’s 75 per cent interest in the Nullagine Iron Ore Joint Venture for the grand total of A$1.

As part of the deal, Fortescue will inherit all assets and rehabilitation obligations of the venture while paying a royalty from sales of future mined iron ore.

Nev Power, Chief Executive Officer at Fortescue, said: “We have enjoyed a strong working relationship with BC Iron through the life of the Nullagine Joint Venture and believe this is a positive outcome for both companies.”

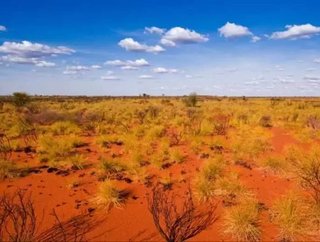

The joint venture is located north of Newman in the Pilbara region of Western Australia. BC Iron was its operator and manager, while Fortescue provided rail haulage and port services.

The rail and port infrastructure put in place through Fortescue has the capacity to export up to 6Mtpa of iron ore.

The Nullagine mine was closed in December 2015 as a result of weak iron ore prices, though it was in full operation for a strong five years prior.

Nullagine is known for its extensive mesas and for iron mineralisation in channel iron deposits.

BC Iron Ltd

Formed in 2006, the exploration company transformed into a producer soon after.

The ASX-listed iron ore mining and development company has assets in the Pilbara region of Western Australia. These include Nullagine (now wholly owned by Fortescue), Iron Valley and Buckland.

Iron Valley, located in Central Pilbara, is operated by Mineral Resources through an iron ore sales agreement. Buckland is more of a potential development project, with a feasibility study showing Ore Reserves of 134.3 Mt.

Fortescue Metals, a global leader in the iron ore industry, was founded in 2003. The Western Australian company owns some of the most significant iron ore mining operations in the world.

These include the Chichester Hub and the Solomon Hub, and will now include 100 per cent ownership of Nullagine.

Fortescue Metals can boast production of 165 million tonnes of iron ore per annum. Quite a feat.

Looking ahead

Fortescue is currently assessing the prospects of restarting operations at Nullagine following the acquisition.

“We will review operations over the coming months to determine the best path forward, taking into account all relevant factors including market demand and other potential opportunities to extract value from the assets,” Mr Power said.

The October issue of Mining Global Magazine is live!


Get in touch with our editor Dale Benton at [email protected]
